Most AI agents break when they hit a language boundary. They hallucinate translations, drift register, or invent content that was never in the source. For production pipelines processing documents in dozens of languages without human review, that failure mode is not acceptable. That’s exactly the problem Scale AI’s new MCP Atlas benchmark was built to surface, and it’s why Lara Translate is in it.

If you work in AI, you know Scale AI. The company behind some of the most demanding data labeling, model evaluation, and AI testing infrastructure in the industry has built something new: MCP Atlas, the first comprehensive benchmark dedicated to testing how well AI models use external tools. Lara Translate is in it as the dedicated translation MCP server.

TL;DR

Quick Answer

Scale AI’s MCP Atlas, a benchmark testing AI agent tool use across 1,000 real-world tasks and 36 MCP servers, includes Lara Translate as its translation tool. It’s an independent technical signal that Lara Translate’s MCP server meets the reliability bar for production-grade agentic workflows.

Why It Matters

AI agents are moving from demos into production pipelines. When they do, translation stops being a convenience feature and becomes infrastructure. An agent that hallucinates a contract clause, drifts register in a support email, or mistranslates a product name at scale creates liability, not just noise. Lara Translate’s inclusion in MCP Atlas is Scale AI’s signal that this translation tool meets the quality bar for these workflows. For teams evaluating which translation API to build on, that inclusion carries real due-diligence weight.

What Is MCP Atlas?

To understand why this matters, it helps to know what MCP Atlas actually is, and why it’s different from any other AI benchmark you’ve seen.

Most AI benchmarks test what a model knows: can it answer a trivia question, write a poem, solve a math problem? MCP Atlas tests something harder and more commercially relevant: can a model act?

The Model Context Protocol (MCP) is the open standard launched by Anthropic that lets AI models like Claude, ChatGPT, and Llama connect to external tools and data sources in a standardized way. Think of it as the USB-C of AI integrations: one protocol, every tool. MCP Atlas is Scale AI’s benchmark for evaluating exactly how well AI agents navigate this ecosystem.

It includes 36 carefully selected MCP servers, 1,000 human-authored evaluation tasks (500 publicly available, 500 held out to preserve leaderboard integrity), and a scoring methodology that measures whether AI agents can call the right tools, pass the correct parameters, and complete complex multi-step workflows reliably. Tasks run against real MCP servers in containerized Docker environments, not mocked APIs or simulations, which means models face actual latency, real error messages, and genuine data formats.

This isn’t a theoretical exercise. It’s how enterprise AI capability is measured.
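To make “call the right tools, pass the correct parameters” concrete, here is a minimal sketch of the kind of check an agent harness could run before dispatching a call. It is not MCP Atlas’s scoring code. The catalog shape follows the `name` and `inputSchema` fields that MCP servers return from `tools/list`; the `check_tool_call` helper and the example schema (including which arguments are required) are assumptions made for illustration.

```python
# Hypothetical pre-flight check for a proposed tool call.
# Not MCP Atlas's scoring logic; the schema below is an illustrative assumption.

def check_tool_call(catalog, name, arguments):
    """Return a list of problems; an empty list means the call looks well-formed."""
    tools = {tool["name"]: tool for tool in catalog}
    if name not in tools:
        return [f"unknown tool: {name}"]

    schema = tools[name].get("inputSchema", {})
    required = schema.get("required", [])
    known = schema.get("properties", {})

    problems = [f"missing required argument: {key}"
                for key in required if key not in arguments]
    problems += [f"unexpected argument: {key}"
                 for key in arguments if key not in known]
    return problems


# Assumed catalog entry, shaped like one item of a tools/list result.
CATALOG = [
    {
        "name": "translate",
        "inputSchema": {
            "type": "object",
            "properties": {"text": {}, "target": {}, "source": {}, "context": {}},
            "required": ["text", "target"],
        },
    }
]

print(check_tool_call(CATALOG, "translate", {"text": [], "target": "fr-FR"}))  # []
print(check_tool_call(CATALOG, "translate", {"text": []}))
# ['missing required argument: target']
print(check_tool_call(CATALOG, "summarize", {}))
# ['unknown tool: summarize']
```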

Why Lara Translate Was Selected

Scale AI doesn’t add tools to MCP Atlas arbitrarily. The benchmark runs against real MCP servers in containerized environments, not mocked APIs, which means every included tool needs stable, well-documented endpoints that behave consistently under benchmark conditions. Tools also need to represent a genuine business use case: something enterprise agents actually need to do in production.

Translation fits that description exactly. It’s one of the most frequently requested capabilities in agentic AI workflows, from customer support to document processing to multilingual content generation. Lara Translate’s MCP server is the tool Scale AI uses for translation tasks in the benchmark.

What “Agent-Ready” Translation Actually Means

Most translation tools assume a human is checking the output. That’s fine for a freelancer workflow. It’s a problem the moment your AI agent needs to translate 10,000 product descriptions overnight with no one in the loop.

Human-facing translation tools are optimized for UI, review workflows, and manual correction. Agent-facing translation tools need something different: precise API responses, predictable behavior, zero hallucination, and the ability to handle domain-specific context at scale without a human in the loop to catch errors.

Lara Translate was built from the ground up on a proprietary Domain-Specific Language Model (DSLM), trained exclusively on professional translation data. Unlike general-purpose LLMs that treat translation as one of thousands of tasks, Lara Translate is architecturally constrained to translate, and only to translate. This eliminates the content fabrication and meaning distortion that plague generic AI translation, and it’s precisely what makes Lara Translate reliable enough for Scale AI to use in benchmarking the most advanced AI models in the world.

Try Lara Translate in your own agent workflow

See how Lara Translate handles domain context, terminology, and format precision without fabricating content.

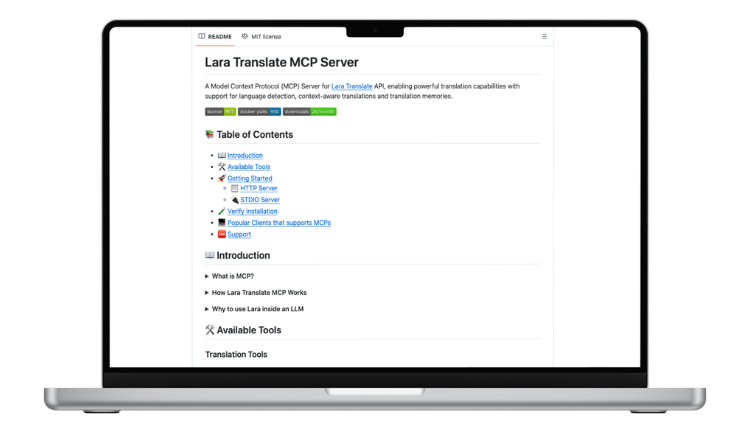

Technical Deep-Dive: How the Lara MCP Server Works

For developers building agentic workflows, here’s what the Lara MCP server actually exposes and why it’s designed for machine consumption.

The translate Tool

The core of the Lara MCP server is the translate tool. When an AI agent calls it, the tool accepts an array of text blocks alongside several optional parameters that give fine-grained control over the output:

- `text`: an array of blocks, each with a string and a `translatable` boolean. This lets agents pass mixed content (a template with translatable copy and untranslatable tokens like product names, UUIDs, or code snippets) in a single request without preprocessing.

- `target`: the ISO language code for the output (e.g. `en-US`, `fr-FR`, `zh-TW`).

- `source`: optional; if omitted, Lara Translate uses automatic language detection.

- `context`: a free-text string where the agent can inject domain information (e.g., “This is a legal contract”, “The user is a tennis coach”). This is what enables context-aware translation: the same source sentence can yield semantically different outputs depending on the domain passed here.

- `instructions`: an array of custom rules (e.g. `["Use formal register", "Do not translate brand names"]`).

- `source_hint`: an optional hint to improve language detection accuracy on short or ambiguous strings.

This level of structured input is what makes the Lara MCP server genuinely useful for agents, rather than just wrapping a simple translate endpoint. Agents can express nuance. The API responds with deterministic precision. Full parameter reference is available in the Lara Developer Hub.
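As a rough illustration, the request an agent sends for the `translate` tool could be assembled like this. MCP tool calls are JSON-RPC 2.0 messages using the `tools/call` method; the parameter names come from the list above. The `build_translate_request` helper is hypothetical, and the `text` key for each block’s string is an assumption, so check the Lara Developer Hub for the exact schema.

```python
import json

def build_translate_request(request_id, blocks, target,
                            source=None, context=None, instructions=None):
    """Assemble a JSON-RPC 2.0 tools/call message for the translate tool.

    blocks is a list of (string, translatable) pairs. The "text" key used
    for each block's string is an assumption, not a confirmed field name.
    """
    arguments = {
        "text": [{"text": string, "translatable": translatable}
                 for string, translatable in blocks],
        "target": target,
    }
    # Optional parameters are only sent when the agent actually sets them.
    if source is not None:
        arguments["source"] = source
    if context is not None:
        arguments["context"] = context
    if instructions is not None:
        arguments["instructions"] = list(instructions)

    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": "translate", "arguments": arguments},
    }


# Mixed content: the SKU is marked untranslatable so it passes through as-is.
request = build_translate_request(
    1,
    [("Your order ", True), ("SKU-4821-XL", False), (" has shipped.", True)],
    target="fr-FR",
    context="E-commerce shipping notification",
    instructions=["Use formal register"],
)
print(json.dumps(request, indent=2, ensure_ascii=False))
```

Leaving `source` out, as in this example, relies on the automatic language detection described above.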

Translation Memories Inside Agent Workflows

One of the most powerful and underused features of the Lara MCP server is its Translation Memory (TM) management suite. This matters enormously for enterprise agentic workflows.

Translation Memories let you teach Lara Translate your brand’s terminology once, then have it apply that knowledge consistently across every translation, in every language, in every future agent run. That’s the whole point of TMs: consistency that scales without manual intervention.

The MCP server exposes a full set of TM tools:

- `list_memories` / `create_memory` / `update_memory` / `delete_memory`: full lifecycle management of your TM library directly from the agent

- `add_translation` / `delete_translation`: append or remove individual translation units, with optional contextual sentences for higher-precision recall

- `import_tmx`: bulk-import from existing platforms by loading industry-standard TMX files directly

- `check_import_status`: monitor large TMX imports asynchronously, so the agent doesn’t block while waiting

For teams already running CAT tools or localization platforms, this means your existing translation assets don’t disappear when you move to an agentic workflow. They travel with you. See the Translation Memory documentation for setup guidance.
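For a sense of how an agent might track a large TMX import, here is a minimal polling sketch. Only the tool name `check_import_status` comes from the server; the `id` argument, the `progress` field in the result, and the `call_tool` callable (standing in for whatever your MCP client uses to invoke a tool) are all assumptions for illustration.

```python
import time

def wait_for_import(call_tool, import_id, poll_seconds=2.0,
                    timeout_seconds=300.0, sleep=time.sleep):
    """Poll check_import_status until the import reports completion.

    Assumed for this sketch: the tool takes {"id": ...} and returns a
    dict with a "progress" value between 0 and 1.
    """
    deadline = time.monotonic() + timeout_seconds
    while True:
        status = call_tool("check_import_status", {"id": import_id})
        if status.get("progress", 0) >= 1:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"import {import_id} did not finish in time")
        sleep(poll_seconds)


# Stand-in client so the sketch runs without a real server.
def make_fake_client(progress_values):
    remaining = iter(progress_values)
    def call_tool(name, arguments):
        return {"id": arguments["id"], "progress": next(remaining)}
    return call_tool


fake_client = make_fake_client([0.2, 0.7, 1.0])
final = wait_for_import(fake_client, "imp_123", sleep=lambda seconds: None)
print(final)  # {'id': 'imp_123', 'progress': 1.0}
```

A real agent would more likely interleave these status checks with other work than sleep between them, which is the point of the import being asynchronous.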

Why the DSLM Architecture Matters for Agents

When a general-purpose LLM handles translation inside an agent workflow, it faces a structural problem: it’s been trained to be helpful, which means it will fill gaps, interpret ambiguity charitably, and sometimes fabricate content to produce a fluent output. In a conversational context, that’s fine. In an autonomous pipeline where no human reviews the output, it’s a liability.

Lara Translate’s architecture operates under strict input-output constraints. It is architecturally forced to stick to the source text. No filler. No invented content. No register drift. This is what Scale AI’s benchmark validates, and it’s what makes Lara Translate a reliable building block for agentic systems that need to scale without supervision.

For a fuller breakdown of how Lara Translate’s DSLM compares to general-purpose models on real translation tasks, see our research post on the Lara Translate blog.

Why This Is a Milestone for AI Translation

The AI agent ecosystem is still in its early stages, but the trajectory is clear: within the next few years, a significant portion of knowledge work will be executed by AI agents operating autonomously across tools, data sources, and workflows. Translation is not a peripheral use case in this world. It’s infrastructure. Every multinational enterprise deploying agents will need those agents to communicate across languages accurately and reliably.

Being included in MCP Atlas is meaningful external validation. Scale AI built the benchmark to expose exactly where agents fail at real-world tool use, and Lara Translate’s MCP server is one of the 36 MCP servers the benchmark runs against. For teams evaluating AI translation tools, that’s a useful signal: an independent organization with serious AI evaluation credentials chose this implementation as the translation layer for its testing infrastructure.

It’s not a product endorsement. It’s something arguably more useful: a technical selection made by people who needed something that wouldn’t break under pressure.

Get Started

Ready to add reliable multilingual output to your agent stack? Here’s where to start:

- Explore the Lara MCP server → github.com/translated/lara-mcp

- Read the full API documentation → developers.laratranslate.com

- Browse the MCP Atlas benchmark → github.com/scaleapi/mcp-atlas

- Create a free Lara Translate account → laratranslate.com

Build with the translation API that doesn’t break under pressure

Connect the Lara Translate MCP server to your agent stack and get context-aware, hallucination-free translation across 200+ languages.

FAQ

What is MCP Atlas?

MCP Atlas is a benchmark published by Scale AI that evaluates how well AI language models use external tools through the Model Context Protocol (MCP). It contains 1,000 human-authored tasks across 36 real MCP servers and 220 tools, requiring models to discover the right tool, call it with correct parameters, and complete multi-step workflows reliably. Tasks run against real servers in containerized environments, not simulations. The leaderboard is available at labs.scale.com/leaderboard/mcp_atlas.

What is the Model Context Protocol (MCP)?

MCP is an open standard launched by Anthropic that lets AI models connect to external tools and data sources in a standardized way. Instead of every AI company building custom integrations for every tool, MCP creates one protocol that any conforming tool can plug into. Lara Translate’s MCP server follows this standard, which means it works with Claude, ChatGPT, and any other MCP-compatible agent out of the box.

Is the Lara Translate MCP server free to use?

The Lara MCP server is open-source and available on GitHub at github.com/translated/lara-mcp. Using it requires a Lara Translate API key. A free tier is available. See the Lara API free plan details for current limits.

How is Lara Translate different from using an LLM for translation inside an agent?

General-purpose LLMs are trained to be helpful, which means they fill gaps and sometimes fabricate content to produce fluent output. In an autonomous pipeline with no human review, that’s a reliability problem. Not a minor one. Lara Translate runs on a Domain-Specific Language Model (DSLM) trained exclusively on professional translation data. It is architecturally constrained to translate the source text and only the source text: no invented content, no register drift, no hallucinated filler.

Does the Lara MCP server support Translation Memories?

Yes. The Lara MCP server exposes a full Translation Memory management suite: you can create, update, and delete TM libraries; add or remove individual translation units; bulk-import existing TM data via the industry-standard TMX format; and monitor the progress of large imports asynchronously. Your existing localization assets carry over when you move to an agentic workflow. They don’t disappear at the migration boundary.

Lara Translate is developed by Translated, a global leader in AI-based language solutions with over 25 years of experience in professional translation.

This article covers:

- What MCP Atlas is and how Scale AI designed it

- Why Lara Translate is included as the benchmark’s translation tool

- What “agent-ready” translation actually requires, and why it differs from human-facing tools

- A technical breakdown of the Lara MCP server: the `translate` tool, Translation Memory management, and DSLM architecture

- Why this inclusion matters for teams evaluating AI translation infrastructure