Most businesses start with a general-purpose AI and quickly hit a wall. The answers are vague, the jargon is wrong, and someone still has to check everything. The problem is not the AI; it is the fit. A domain-specific language model (DSLM) for business is built to close that gap, and for translation-heavy teams the difference is not marginal: it is the difference between a draft and a deliverable.

Why domain-specific language models for business solve the generalist problem

When AI tools first started appearing in business workflows, the promise was appealing: one powerful model that could write emails, analyze data, answer customer questions, and draft contracts — all from a single interface.

Large Language Models (LLMs) like ChatGPT, Claude or Gemini are genuinely impressive. They have been trained on an enormous volume of text from across the internet, books, and scientific papers. Ask them anything and they will give you an answer.

But here is the problem with a tool that knows a little about everything: it often does not know enough about what actually matters to your business.

Think of a general LLM as a well-read generalist. They can hold a conversation about medicine, law, marketing, and software engineering. But would you trust them to handle a sensitive client negotiation in your industry? To interpret a niche regulatory document? To understand the specific terminology your team uses internally?

Probably not — at least not without significant hand-holding.

This is the core challenge with general-purpose LLMs in a business context. Trained broadly, they spread their knowledge thin. They do not know your industry’s jargon, your company’s processes, or the nuances that separate a good answer from the right answer in your field.

There is also the reliability issue. General-purpose models are known to hallucinate — confidently producing information that sounds correct but is factually wrong. In a casual setting, that is annoying. In a business setting, it can be costly, embarrassing, or legally risky.

And then there is cost. Large general-purpose models require significant computing resources. Every query you send to a massive LLM is more expensive than it needs to be — especially when you only need answers within a specific domain.

What is a domain-specific language model (DSLM)?

A domain-specific language model (DSLM) is an AI model trained on data from a specific industry or function rather than the open web. Instead of knowing a little about everything, it knows a lot about one domain — which makes it faster, more accurate, and cheaper to run than a general-purpose LLM for targeted tasks.

The result is an AI that does not just speak your language — it thinks in it.

According to Gartner analysts, DSLMs can deliver up to four times more efficiency than general-purpose LLMs in terms of cost and response speed (Gartner, “Predicts 2024: AI”). The same research predicts that more than 60% of generative AI used by enterprise businesses will be domain-specific by 2028. This is not a niche trend — it is the direction the entire industry is heading.

DSLM vs LLM: a direct comparison

| | General LLM | Domain-Specific LLM (DSLM) |
|---|---|---|
| Training data | Open web, broad sources | Curated, industry-specific data |
| Accuracy in domain | Variable, often requires correction | High, contextually precise |
| Hallucination risk | Higher | Significantly reduced |
| Cost per query | Higher (large model overhead) | Lower (smaller, focused model) |
| Data governance | Often public cloud | Can be scoped to your environment |
| Onboarding speed | Requires heavy prompting | Understands context out of the box |

Here is why DSLMs consistently outperform general LLMs for real business use:

Accuracy where it counts. Because a DSLM is trained on domain-specific data, it produces answers that are far more precise and contextually relevant. It understands subtleties that a general model would miss or misinterpret.

Fewer hallucinations. By grounding the model in curated, industry-relevant knowledge, DSLMs dramatically reduce the risk of the AI confidently producing incorrect output — a critical advantage in any high-stakes business environment.

Lower costs and faster responses. DSLMs are typically smaller and more focused than general LLMs, which means they are cheaper to run and faster to respond. You are not paying for knowledge you do not need.

Better compliance and data governance. General-purpose LLMs often rely on public cloud infrastructure, which raises concerns about where your business data goes. DSLMs can be built to keep sensitive information within your organization’s boundaries — a major advantage for industries with strict regulatory requirements.

Alignment with your business. A DSLM can be shaped around your specific processes, internal data, and operational needs in a way that a general model cannot replicate.

How Lara Translate applies domain-specific AI to translation

Lara Translate is not a general-purpose AI trying to cover every use case. It is purpose-built for translation workflows, trained on linguistic data and optimized for accuracy across 70+ file formats. That means your team gets translations that preserve context, tone, and terminology — not just words swapped between languages.

Try Lara Translate in your own workflow

Test Lara Translate on a real client text and see how it handles your terminology, context, and formatting.

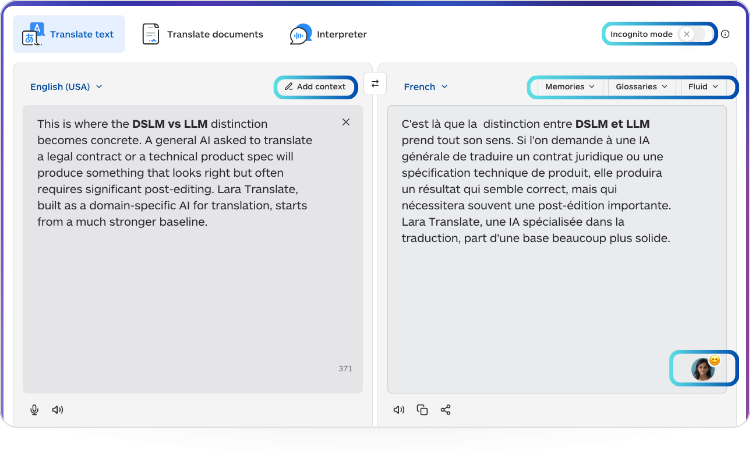

This is where the DSLM vs LLM distinction becomes concrete. A general AI asked to translate a legal contract or a technical product spec will produce something that looks right but often requires significant post-editing. Lara Translate, built as a domain-specific AI for translation, starts from a much stronger baseline.

Day-to-day, this translates into real advantages:

Your team gets output they can actually use. Not generic translations that require extensive editing or fact-checking — output that is contextually accurate from the first draft. You can guide Lara Translate further with context and instructions to match your specific use case.

Terminology stays consistent. With glossaries and translation memories, Lara Translate applies your preferred terms every time — critical for regulated industries, brand-sensitive content, or technical documentation.

Your data stays where it belongs. Incognito Mode ensures your documents are not used to train any model, giving you the data governance that public-cloud general LLMs cannot offer.

Translation styles match your content. Lara Translate’s translation styles let you choose how output is calibrated — from faithful/literal to more adaptive approaches — depending on whether you are translating a contract, a marketing campaign, or a support article.

Human review is available when stakes are high. For content where accuracy is non-negotiable, you can add a human review layer on top of AI translation without leaving the platform.
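The terminology point above can be made concrete with a small check that a reviewer might run on any translated text, whichever tool produced it. This is a minimal sketch under stated assumptions, not Lara Translate's API or its glossary mechanism: `GlossaryEntry`, `missing_glossary_terms`, the sample glossary, and the sample sentences are all hypothetical names and data invented for illustration.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class GlossaryEntry:
    """One approved term pair (hypothetical structure, for illustration only)."""
    source_term: str  # term as it appears in the source language
    target_term: str  # approved translation expected in the output


def _contains(text: str, term: str) -> bool:
    """Case-insensitive match of `term` in `text`, not embedded in a longer word."""
    pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def missing_glossary_terms(
    source: str, translation: str, glossary: list[GlossaryEntry]
) -> list[GlossaryEntry]:
    """Return entries whose source term occurs in `source` but whose
    approved target term does not occur in `translation`."""
    return [
        entry
        for entry in glossary
        if _contains(source, entry.source_term)
        and not _contains(translation, entry.target_term)
    ]


# Hypothetical English-to-Italian glossary and sample sentences.
GLOSSARY = [
    GlossaryEntry("purchase order", "ordine di acquisto"),
    GlossaryEntry("invoice", "fattura"),
]

SOURCE = "Attach the invoice to the purchase order."
GOOD = "Allega la fattura all'ordine di acquisto."
BAD = "Allega la fattura all'ordine d'acquisto."  # deviates from the approved term

if __name__ == "__main__":
    for label, text in (("GOOD", GOOD), ("BAD", BAD)):
        missing = missing_glossary_terms(SOURCE, text, GLOSSARY)
        print(label, [entry.source_term for entry in missing])
```

A check like this only flags exact-term deviations; it does not account for inflected forms, so it is better treated as a first-pass filter ahead of human review than as a quality gate.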

There is also a competitive dimension worth considering. Businesses that deploy AI built for their specific context will outpace those relying on generic tools. The advantage is not just efficiency — it is the quality of decisions, the speed of execution, and the confidence that comes from knowing your AI is working with your expertise, not around it.

The bottom line

The era of “one model for everything” is giving way to something smarter: domain-specific AI designed to excel in one business context. For businesses, this shift is not about keeping up with technology trends; it is about choosing the right tool for the job.

A general LLM is broad by design: useful for exploration, but not what you reach for when precision matters. A domain-specific language model like Lara Translate is built to be a precision instrument, one that understands your workflows, reduces the noise, and helps your team do better work, not just faster work.

Because in business, the AI that knows your world will outperform the one that knows a little about everything.

Why it matters

Businesses adopting general AI tools for specialized workflows accept a hidden tax: time spent correcting outputs, second-guessing accuracy, and re-prompting for context the model never had. Domain-specific AI eliminates that friction at the source. For translation in particular, where a wrong term in a contract, product listing, or support article has direct commercial and legal consequences, the gap between a general LLM and a purpose-built tool like Lara Translate is not a feature difference. It is the difference between a draft and something you can actually ship.

FAQ

What is a domain-specific language model?

A domain-specific language model (DSLM) is an AI model trained on data from a specific industry or business function, rather than broad internet data. It delivers more accurate, relevant, and cost-efficient results than a general-purpose LLM when used within its target domain.

Is domain-specific AI better than a general LLM for business?

For most business workflows, especially those involving specialized terminology, compliance requirements, or sensitive data, yes. DSLMs reduce hallucination rates, lower per-query costs, and require less prompting to produce usable output.

How is Lara Translate different from general AI translation tools?

Lara Translate is purpose-built for translation workflows, with support for glossaries, translation memories, 70+ file formats, multiple translation styles, and Incognito Mode for data privacy. It is designed as domain-specific AI for translation, not a general chatbot asked to translate.

This article is about

- What domain-specific AI for business is and how it differs from general-purpose LLMs

- Why general LLMs hallucinate more and cost more in specialized workflows

- How to compare DSLM vs LLM across accuracy, cost, and data governance

- How Lara Translate applies domain-specific AI to translation workflows

- Key features that reduce post-editing: glossaries, translation memories, and Incognito Mode

- When to add human review on top of AI translation for high-stakes content
